Introduction to Prompt Engineering and Agentic AI

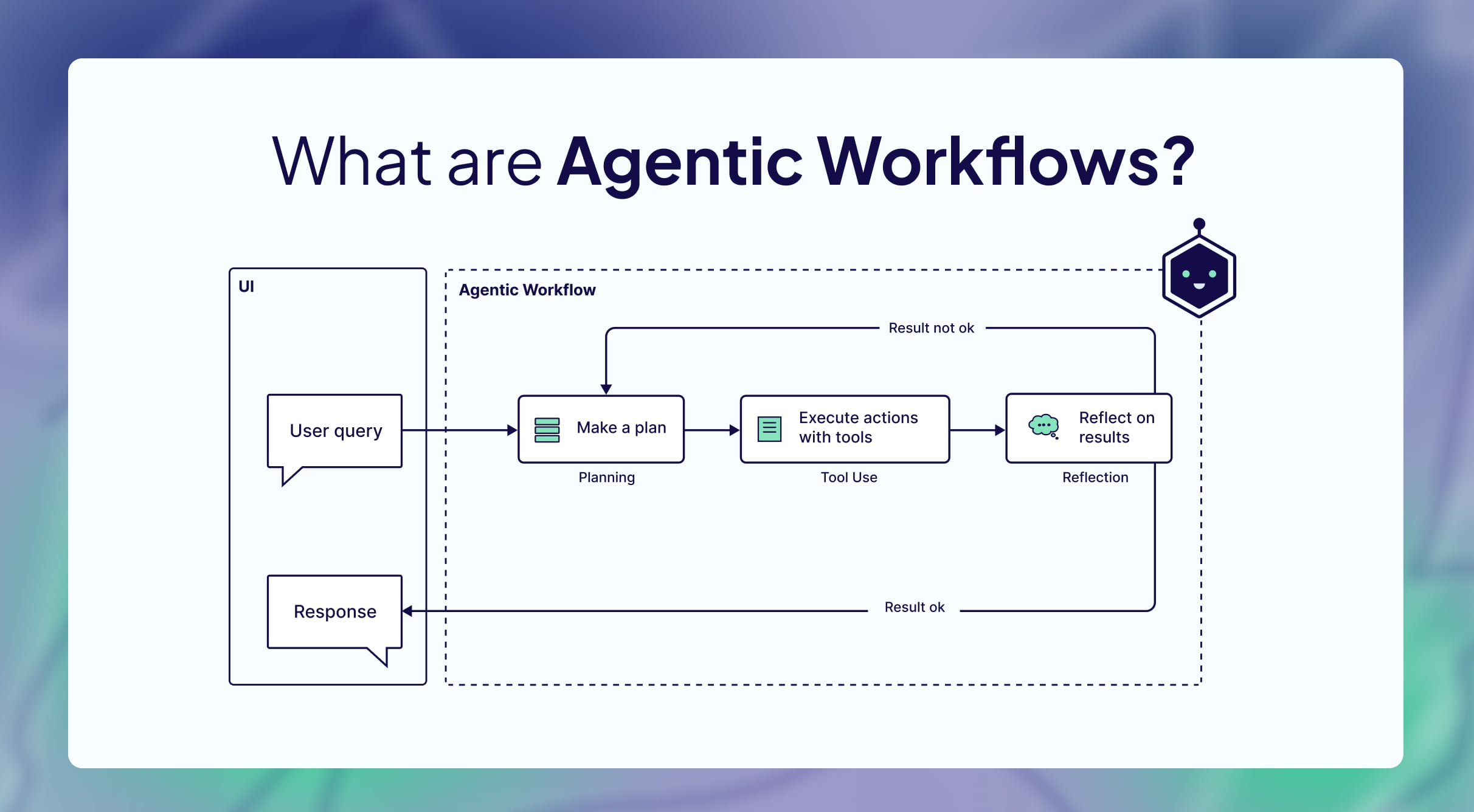

Prompt engineering is the process of designing and refining inputs to elicit desired outputs from AI models, particularly LLMs like those from OpenAI or Anthropic. It bridges human intent and machine execution, evolving from basic queries to sophisticated frameworks that incorporate context, examples, and constraints. For agentic AI—systems that exhibit agency by autonomously pursuing goals through planning, tool use, and reflection—prompt engineering becomes even more critical. Agentic AI represents a shift from reactive chatbots to proactive entities capable of handling complex workflows, such as software development or data analysis.

The synergy between prompt engineering for agentic AI and autonomous systems lies in enabling loops of observation, planning, action, and reflection. This not only improves efficiency but also addresses limitations like hallucinations or context overflow. As AI adoption grows, mastering these techniques is essential for building scalable, production-grade agents.

Defining Core Concepts

Prompt Engineering Basics:

At its foundation, prompt engineering for agentic AI involves clarity, specificity, and iteration. Prompts should use direct language, define roles (e.g., “You are a software architect”), and incorporate examples to guide behavior. Techniques like zero-shot (no examples), few-shot (limited examples), and chain-of-thought (step-by-step reasoning) form the toolkit.

Agentic AI Overview:

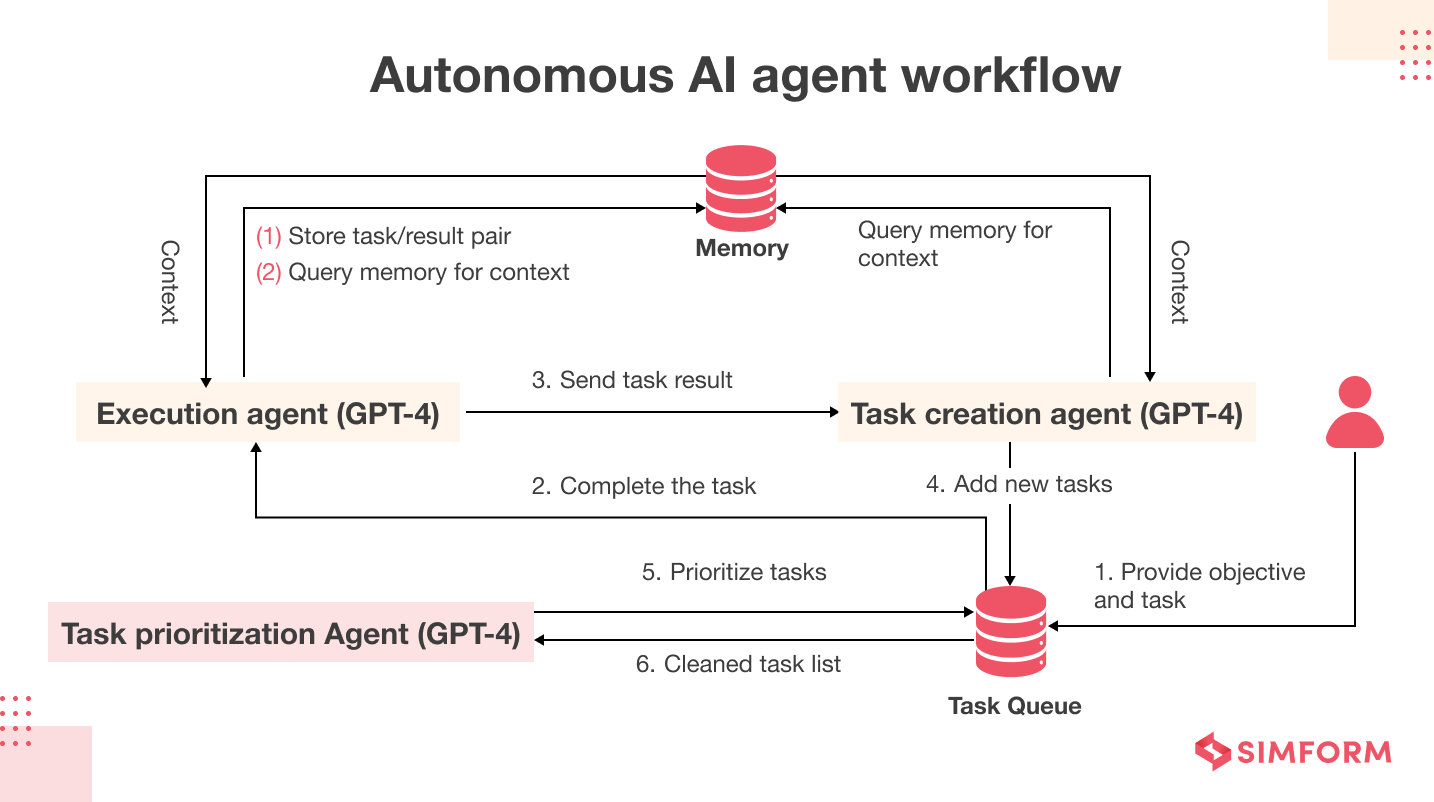

Agentic AI systems are autonomous entities that integrate LLMs with tools, memory, and reasoning mechanisms to achieve goals without constant supervision. Key components include:

- Planning: Breaking tasks into sub-steps.

- Tool Use: Interfacing with external APIs or functions.

- Reflection: Evaluating outcomes and adjusting.

- Memory: Storing context for persistence.

Unlike generative AI, agentic AI emphasizes outcome responsibility over mere response generation, making prompt engineering for agentic AI pivotal for reliability.

The following table summarizes key differences between traditional prompt engineering and prompt engineering for agentic AI:

| Aspect | Traditional Prompt Engineering | Prompt Engineering for Agentic AI |

|---|---|---|

| Focus | Single-turn responses | Multi-step autonomous loops |

| Key Techniques | Few-shot, CoT | Tool integration, context compaction |

| Primary Goal | Accurate output | Goal achievement with adaptation |

| Context Management | Static | Dynamic, with retrieval and summarization |

| Evaluation | Output quality | Task success and efficiency |

| Examples | Text summarization | Software debugging or workflow automation |

Essential Techniques in Prompt Engineering for Agentic AI

To build effective autonomous systems, employ advanced techniques that enhance reasoning and tool interaction. Here’s a detailed exploration:

- Chain-of-Thought (CoT) Prompting: Encourage step-by-step reasoning to improve accuracy on complex tasks. For agentic AI, extend to “tree-of-thought” for branching decisions. Example: “Think step-by-step: First, analyze the code error; second, propose fixes; third, test virtually.”

- Few-Shot and Role-Based Prompting: Provide examples and assign personas (e.g., “Act as a cybersecurity expert”) to align outputs with agent goals. In agentic contexts, this defines behaviors like “escalate if uncertain.”

- Tool Integration and Structured Formats: Use XML or JSON for tool calls, ensuring agents invoke functions precisely. Example from Cline agent: “<read_file>src/main.js</read_file>” for modular actions.

- Context Compaction and Retrieval: Summarize long contexts to avoid “context rot,” using just-in-time retrieval for efficiency. This is vital for autonomous systems handling large data.

- Iterative Refinement: Test prompts, refine based on evals, and use frameworks like DSP for optimization, reducing tokens by 50-80%.

- Output Anchoring and Constraints: Prefill structures (e.g., “Response: {summary: ”, actions: []}”) to enforce formats, aiding integration in agentic workflows.

The table below illustrates token reduction trends from prompt optimization in agentic AI projects, based on aggregated case data:

| Optimization Stage | Average Token Reduction (%) | Eval Score Improvement (%) |

|---|---|---|

| Initial Prompt | 0 | Baseline |

| CoT Integration | 20-30 | 10-15 |

| Tool Structuring | 30-50 | 15-25 |

| Compaction & Caching | 50-80 | 20-40 |

| Full Iteration | 70-90 | 30-50 |

Best Practices for Building Autonomous Systems

Implementing best practices in prompt engineering for agentic AI maximizes ROI and minimizes errors:

- Clarity and Specificity: Use simple, direct language; avoid ambiguity. Organize prompts into sections (e.g., ## Instructions, ## Tools) for better model comprehension.

- Minimalism with Iteration: Start with basic prompts, test on top models, and add elements based on failures. Keep it simple: “More words don’t always mean better.”

- Safety and Constraints: Include escalation rules (e.g., “If data missing, query user”) and guards against risks like biases or unsafe actions.

- Evaluation-Driven Development: Use “vibe checks” initially, then build evals for accuracy and efficiency. Prioritize single agents before multi-agent orchestration.

- Context Management: Employ compaction, sub-agents for isolation, and memory tools (e.g., NOTES.md) to handle long contexts without overload.

- Tool Design: Create non-overlapping, token-efficient tools; separate planning from action (e.g., PLAN vs. ACT modes).

For agentic AI best practices, the P.A.R.T. framework (Prompt, Archive, Resources, Tools) ensures structured efficiency. Always iterate: Refine prompts based on performance metrics.

Real-World Examples and Case Studies

- Cline Coding Agent: Uses a ~11,000-character system prompt with XML tool formats and iterative confirmation, enabling safe code edits in VS Code.

- Bolt Browser Agent: Employs concise prompts with artifact tags for holistic planning, ensuring modular code in constrained environments.

- BrainBox AI HVAC Optimization: Reduced tokens from 4,000 to 1,200 via prompt engineering, automating 30,000 facilities with simple agents.

- CloudZero Cost Analysis: Single-agent prompts handled daily reports; multi-agent added only for benchmarks, showcasing phased complexity.

In customer support, few-shot prompts classify tickets with 91% accuracy, demonstrating prompt engineering for agentic AI in production.

Challenges in Prompt Engineering for Agentic AI

Common hurdles include context limitations (e.g., attention budget depletion), hallucinations in long chains, and over-reliance on orchestration leading to high overhead. Security risks like prompt injections require defensive scaffolding. Solutions involve compaction, evals, and hybrid retrieval to maintain reliability in autonomous systems.

Future Trends and Implications

By 2027, agentic AI adoption is projected to dominate sectors like healthcare and finance, with prompt engineering evolving toward automated optimization and multi-modal integration. Ethical considerations, such as bias mitigation, will drive governance-focused prompts. As models advance, prompt engineering for agentic AI will focus on human-AI collaboration, ensuring autonomous systems are trustworthy and efficient.

In conclusion, mastering prompt engineering for agentic AI empowers the creation of transformative autonomous systems. By applying these techniques and best practices, developers can unlock unprecedented efficiency and innovation.