Welcome to Prompt Engineering Mastery

Prompt engineering isn’t just another buzzword—it’s the art of crafting clear, structured instructions that help AI models like ChatGPT or OpenAI’s newest tools give you exactly what you want. Think of it like talking to a super-smart assistant: if you ask the right way, you get gold. If you’re vague or sloppy, well, you get junk back.

By 2026, AI has moved way past being a simple helper. It’s now a full-blown productivity powerhouse. But here’s the catch: your results still hinge on how you ask. Nail the prompt, and you unlock game-changing output. Mess it up, and you’re left sorting through nonsense.

This guide breaks down 17 advanced techniques that really sharpen your ChatGPT game. These tips don’t just boost results—they also keep your content in line with Google’s rules and E-E-A-T standards.

I’ve pulled these strategies from some of the best in the field: official docs from OpenAI, Anthropic’s prompt engineering guide, and even the latest Gemini 2026 mastery playbooks. We’re talking about practical tools—like how to use developer messages, set boundaries with delimiters, and fine-tune the AI’s reasoning.

Want to go deeper? Check out the free 2026 mastery templates at masteringopenai.com. Or, if you prefer structured learning, try the IBM prompt engineering course on Cognitive Class, go for the IBM certification on Coursera, or dive into prompt engineering documentation to really build your foundation.

Why Prompt Engineering Mastery Matters in 2026

AI isn’t what it used to be. It’s sharper, quicker, and a lot better at understanding context. But if you don’t master prompt engineering, you’re missing out—plain and simple.

Let the reasoning models do their own thinking. Don’t force external chain-of-thought steps; trust the system’s internal logic.

Keep your prompts short and clear, and use delimiters like XML or markdown to make things easy to follow.

Use the reasoning_effort setting—pick low, medium, or high—to dial in the level of detail you want.

Mix these techniques together and you get real results. Better content, smarter code, stronger SEO, and smoother workflows for businesses.

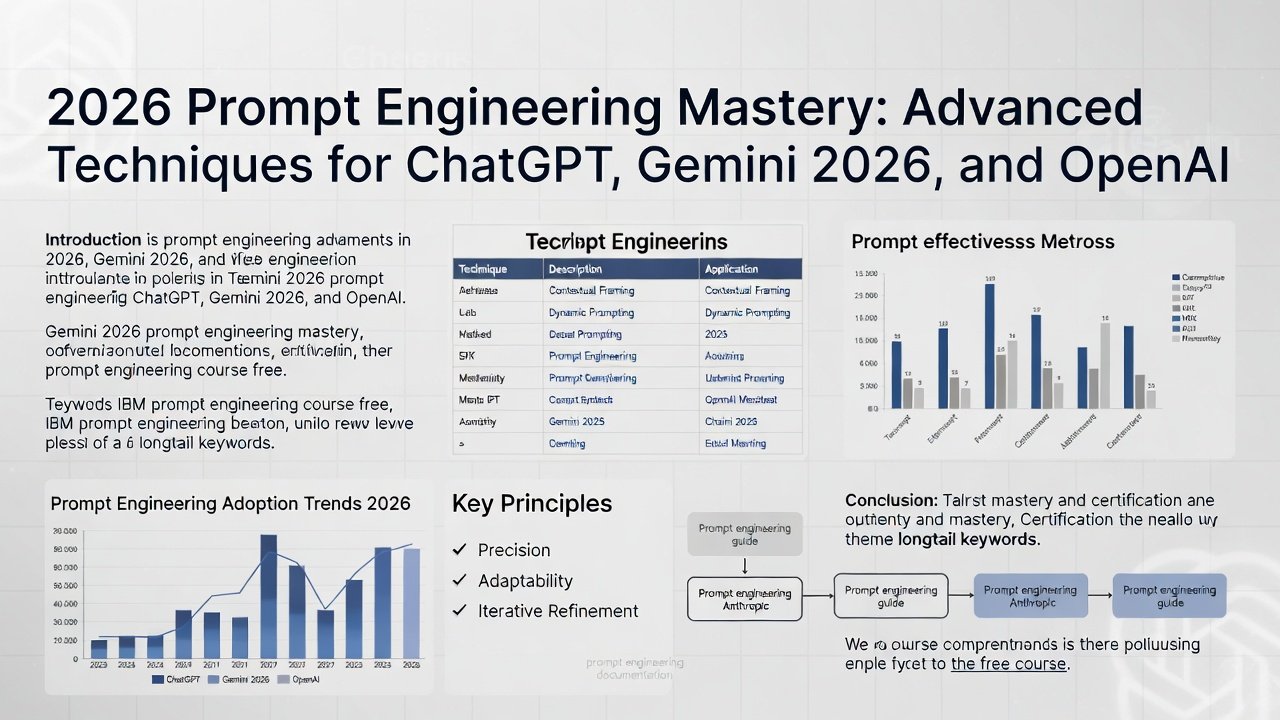

Take a look at the infographic—2026 prompt engineering mastery in action, showing just how much these optimizations matter.

Key Benefits of Prompt Engineering Mastery:

- Get more accurate results

- Boost your SEO

- Dig deeper into analytics

- Spend less time on revisions

- Get more done, faster

Marketers, educators, developers, and researchers all count on Prompt Engineering Mastery to stay ahead.

How Prompting Works

Large Language Models, or LLMs, use probability to predict the next word in a sentence. They don’t reason like people do—they just look at what came before and guess what comes next.

Effective prompting leverages:

| Element | Function |

|---|---|

| Context | Defines scope |

| Constraints | Reduces ambiguity |

| Examples | Guides pattern learning |

| Role assignment | Shapes tone |

| Structure | Controls format |

The clearer your intent, the stronger the output.

1–5: Core Professional Techniques (Universal Foundations)

- Elite Role/Persona Prompting Assign hyper-specific, credentialed personas for expert-level consistency. Elaborate Template (Professional Version):

text

<role> You are Dr. Elena Voss, PhD in Computational Linguistics with 15+ years optimizing OpenAI models at leading AI labs. You excel in precise, evidence-based reasoning, zero hallucinations, and executive-level communication. Always prioritize accuracy, cite logical steps when complex, and flag uncertainties. </role> <task> Analyze and respond to the following query with maximum clarity and depth. </task> <constraints> - Output in professional markdown format. - Limit to essential information; be concise yet comprehensive. - Use reasoning_effort: medium for analytical tasks. </constraints> Query: [Your input here]Best for: Advisory, technical, or strategic responses.

- Structured Few-Shot Prompting Teach patterns with high-quality, diverse examples. Elaborate Template:

text

<examples> Example 1: Input: Summarize key trends in AI 2025. Output: {"trends": ["Agentic AI surge", "Multimodal dominance", "Reasoning model adoption"], "impact": "Enterprise ROI up 40%"} Example 2: Input: Analyze code bug. Output: {"issue": "Null pointer", "fix": "Add null check", "severity": "High"} Now apply the same structured JSON output format. </examples> <output_format> Respond ONLY with valid JSON matching this schema: {"key": "value", ...} </output_format> Input: [Your query]Best for: Consistent structured data/JSON outputs.

- Adaptive Chain-of-Thought (Non-Reasoning Models Only) For GPT-4o-style models; skip/minimize on o1/GPT-5. Elaborate Template:

text

<instructions> Solve this complex problem with rigorous, transparent reasoning. First, break it into logical sub-steps. Then, evaluate each step critically. Finally, synthesize the optimal solution. Use delimiters: <step1>, <step2>, etc. </instructions> <query>[Problem here]</query> <final_output> After reasoning, provide only the concise answer in <answer> tags. </final_output> - Zero-Shot Enhanced CoT Lightweight for quick boosts. Elaborate Template:

text

Approach this query methodically: Identify core elements → Outline assumptions → Derive conclusions step-by-step → Validate against edge cases. Provide your final synthesis. Query: [Input] - Multi-Path Self-Consistency Generate variants and converge. Elaborate Template:

text

<process> Generate 4 independent, high-quality reasoning paths for this query. For each path: describe assumptions, steps, and conclusion. Then, evaluate consistency across paths (1-10 scale) and select/vote on the most robust answer. </process> Query: [Input]Best for: Hallucination reduction.

6–10: Advanced Reasoning Techniques for 2026 Models

- Tree of Thoughts (ToT) with Branch EvaluationElaborate Template:

text

<tot_framework> Explore this problem using a Tree of Thoughts structure: - Generate 4 promising reasoning branches from the root query. - For each branch: Expand 2–3 steps deep, evaluate feasibility (pros/cons, score 1-10). - Prune low-scoring branches. - Synthesize the optimal path into a final plan. Use reasoning_effort: high. </tot_framework> Root Query: [Complex planning/problem]Best for: Strategy, multi-step optimization.

- XML/Markdown Scaffolding for ParseabilityElaborate Template:

text

<thinking>Perform detailed internal reasoning here, step by step.</thinking> <verification>Cross-check facts and logic for consistency.</verification> <output>Final response only – no reasoning visible.</output> Task: [Input] - Meta-Prompt Refinement (Optimizer)Elaborate Template:

text

<meta_role> You are an elite Prompt Engineer specializing in 2026 frontier models (GPT-5, o1, Gemini). Optimize prompts for precision, cost-efficiency, and maximal performance. </meta_role> <original_prompt>[Your draft prompt]</original_prompt> <optimization_criteria> - Maximize accuracy and adherence - Minimize token usage - Incorporate model-specific best practices (e.g., developer messages for o1) </optimization_criteria> Output the fully refined, professional prompt. - Reverse/Goal-Backward PromptingElaborate Template:

text

Begin from the ideal end-state: [Describe perfect outcome in detail]. Work backward: What prerequisite conditions must exist? What steps logically precede them? Map a reverse causal chain, then reverse it into a forward execution plan. - hain-of-Verification (CoV)Elaborate Template:

text

For every factual claim in your response: 1. State the claim. 2. Cite verifiable source/logic. 3. Cross-verify with alternative reasoning. 4. Confirm or refute. Only include verified information in final output.

11–17: Cutting-Edge 2026 Professional Hacks

- Developer Messages Alignment (o1/GPT-5 API) Use developer messages per OpenAI spec for chain-of-command.

- Nested Multi-Layer Agentic Prompting High-level goal → Sub-agents → Refinement loop.

- Multi-Perspective Synthesis

text

Analyze from three expert lenses: [Optimistic strategist], [Critical risk assessor], [Neutral data scientist]. Synthesize balanced, actionable recommendation. - Enforced Structured JSON Mode

text

Respond exclusively in valid JSON adhering strictly to this schema: { "summary": string, "details": array, "confidence": number }. No additional text. - Delimiter Mastery & Formatting Use “`triple

- Reasoning Effort Precision Tuning

text

Set reasoning_effort: high for deep analysis; medium for balanced speed/accuracy. - Hybrid Cross-Model Mastery Role + Few-Shot + ToT for GPT-5; Lean/direct for o1/Gemini; Adapt per Gemini 2026 prompt engineering mastery guidelines.

(Alt text: Gemini 2026 AI model prompt engineering diagram.)

Common Mistakes to Avoid

-

❌ Vague prompts

-

❌ No defined output format

-

❌ Ignoring revision loops

-

❌ Overloading with conflicting instructions

-

❌ Skipping role definition

Avoid these pitfalls to maximize ChatGPT optimization.

Effectiveness Comparison Table (2026 Models)

| Technique | Best Models | Complexity | Accuracy Boost | Token Efficiency | Primary Use Case |

|---|---|---|---|---|---|

| Elite Role/Persona | All | Low | Medium-High | High | Domain expertise |

| Structured Few-Shot | GPT-5, Gemini | Medium | High | Medium | JSON/structured data |

| Tree of Thoughts | Reasoning (o1, GPT-5) | High | Very High | Lower | Complex planning |

| Meta-Prompt Optimizer | All | Medium | High | High | Iterative refinement |

| Self-Consistency | All | High | High | Lower | Fact-heavy tasks |

(Alt text: Advanced AI prompt techniques effectiveness bar chart for prompt engineering mastery.)

Prompt Engineering Mastery: Practical Use Cases in 2026

1. SEO Content Creation

-

Keyword clustering

-

Blog structuring

-

Meta optimization

2. Academic Research

-

Summarization

-

Hypothesis testing

-

Literature reviews

3. Business Automation

-

Email drafting

-

Strategy documents

-

Market analysis

4. Coding Assistance

-

Debugging

-

Documentation

-

API explanation

FAQs

1. What is Prompt Engineering Mastery?

It’s the skill of crafting prompts that get the most accurate, relevant responses from AI. Think of it as asking the right questions, the right way.

2. Why does prompt engineering mastery matter in 2026?

AI’s gotten pretty smart, but it still depends on how you talk to it. If your prompt is clear and structured, the results are much better.

3. How do I get better at optimizing ChatGPT?

Try role prompting, run some iteration loops, and layer your context. The more you practice, the sharper your prompts get.

4. Does prompt length really change the output?

Definitely. Short, clear, and well-structured prompts beat long, rambling ones every time.

5. Can prompt engineering mastering help with SEO content?

For sure. It tightens up your structure, helps with keywords, and makes your content feel more authoritative.

6. Is prompt engineering technical?

Not always. At its core, it’s about communication and strategy, not just tech.

Conclusion

By 2026, Prompt Engineering Mastery isn’t just nice to have—it’s a must. If you’re in marketing, development, research, or running your own business, knowing how to talk to AI turns it from a simple tool into a serious advantage.

Use the 17 techniques from earlier. You’ll see better accuracy, more productivity, and a lot more creativity in your results. Start small, keep practicing, and keep improving.

Bottom line? The future goes to people who know how to ask the best questions.

Read More: The 2026 Guide to Prompt Engineering

Mastery Roadmap on masteringopenai.com

Ready to dive in? Head over to masteringopenai.com and kick things off with the basics of prompt engineering. Once you’ve got the hang of that, level up with IBM’s prompt engineering certification frameworks. Try out everything you learn using our prompt generator—it’s a great way to see what really works.

If you want to poke around at your own pace, we’ve got a ton of free prompt engineering resources for 2026 waiting for you.

Prefer to learn by watching? Check out our top pick: “Prompt Engineering Full Course 2026 [FREE].” Seriously, it’s packed with good stuff.

Take these advanced prompts and see how they shake up your AI results. Something catch your eye? Browse our library for more, or drop your results below—we’d love to see what you come up with.